Introducing Skytells Cloud Agents: Parallel Engineering at the Speed of Intent

April 17, 2026 — Software teams have always had more work than bandwidth. That's not new. What's new is that we now have agents capable of handling meaningful engineering work — reviewing code, writing fixes, implementing features — and the question becomes whether you let them do one thing at a time or actually put them to work the way a real team operates.

Cloud Agents does the latter. Multiple agents, same repository, running simultaneously. Today we're making it generally available.

The problem isn't speed. It's queue depth.

Ask most engineering leads what slows their team down and they'll say code review. Or context switching. Or waiting on another team's merge. Rarely do they say "we just can't write code fast enough."

The bottleneck isn't velocity — it's serialization. At any given moment, a dozen things need to happen in a codebase, and practically speaking, one person or one tool is working on one of them. The rest are waiting. A PR sits unreviewed for six hours not because no one cares but because the person who reviews it is deep in something else. A security sweep gets scheduled for "after the sprint" because no one has the bandwidth to run it now, while the sprint is active.

That's the queue depth problem. And it compounds faster than most teams track.

At 11 PM, when a regression hits production and you need someone to find the bad commit, review the blast radius, draft a fix, and check whether the adjacent service is affected — you don't need a faster tool. You need four things happening at once.

That's what Cloud Agents is built for.

Running multiple agents without creating a mess

Before we get into what Cloud Agents does, it's worth being honest about what naive parallelization actually produces.

Open four browser tabs, run four AI sessions against the same repository, and see what you get: two of them modifying the same file in conflicting directions, one of them reviewing a diff the other already changed, and a fourth that's working from repository state that was accurate thirty minutes ago. The outputs are technically "parallel" but operationally useless — you spend more time reconciling them than you saved by running them at the same time.

Coordination isn't an optional layer on top of parallel agents. It's what makes parallel agents a real system instead of a chaos experiment.

In Cloud Agents, every run is scoped before it starts. When you dispatch a task, that task gets a defined context: which repository, which files, what the intent is. The agent doesn't explore beyond that scope. It executes, produces a structured output, and hands that result back to the Console where it appears alongside every other active run.
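To make "scoped before it starts" concrete, here is a minimal sketch of what a run scope could look like. The `RunScope` class, its field names, and the repository and file paths are all hypothetical illustrations, not the Cloud Agents data model; the sketch only assumes that a scope names a repository, a set of file patterns, and an intent.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class RunScope:
    """Hypothetical scope attached to a run at dispatch time."""
    repository: str
    file_patterns: tuple[str, ...]  # glob patterns the agent may touch
    intent: str

    def allows(self, repository: str, path: str) -> bool:
        """Return True only if the path falls inside this run's declared scope."""
        if repository != self.repository:
            return False
        return any(fnmatchcase(path, pattern) for pattern in self.file_patterns)


# Illustrative values only; none of these names come from a real project.
scope = RunScope(
    repository="acme/game-backend",
    file_patterns=("services/matchmaking/*.py", "tests/matchmaking/*.py"),
    intent="Fix the regression in lobby timeout handling",
)

in_scope = scope.allows("acme/game-backend", "services/matchmaking/lobby.py")
out_of_scope = scope.allows("acme/game-backend", "services/billing/invoice.py")
```

The point of the frozen dataclass is that a scope is decided once, at dispatch, and cannot quietly widen while the run is in progress.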

The coordination layer above all of it is Eve — our multi-agent AI. Eve isn't a scheduler. It doesn't just assign tasks to available agents and track when they finish. It reasons about what each agent is doing, what state the repository is in, and where the output from one run needs to feed the input of another before that second run can proceed correctly. When conflicts are possible, Eve surfaces them before they become merge problems.
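One simple form of surfacing conflicts early is checking whether two runs plan to touch the same files. The sketch below is an assumption-heavy illustration, not how Eve works internally: `find_scope_conflicts` and the run names are hypothetical, and real conflict detection would need to reason about far more than file overlap.

```python
from itertools import combinations


def find_scope_conflicts(planned: dict[str, set[str]]) -> list[tuple[str, str, set[str]]]:
    """Return every pair of runs whose planned file sets overlap."""
    conflicts = []
    # Sort run names so the result order is deterministic.
    for run_a, run_b in combinations(sorted(planned), 2):
        shared = planned[run_a] & planned[run_b]
        if shared:
            conflicts.append((run_a, run_b, shared))
    return conflicts


# Hypothetical plan: which files each run expects to modify.
planned_files = {
    "feature": {"api/routes.py", "api/models.py"},
    "bugfix": {"api/models.py"},
    "docs": {"README.md"},
}

conflicts = find_scope_conflicts(planned_files)
```

Here the `bugfix` and `feature` runs both plan to edit `api/models.py`, so that pair is reported before either run starts, while `docs` is free to proceed.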

ZHouse Studios — three repositories, three simultaneous agents

ZHouse Studios is a multinational game studio with about 30 engineers split across backend services, frontend clients, and game engine integrations. By the time they came to us, their QA pipeline had become a genuine bottleneck — not because their engineers were slow, but because review work kept landing on a small number of senior engineers regardless of whether it needed their seniority.

The junior engineers were blocked. The senior engineers were doing work that didn't require them. The critical path ran through people instead of through process, and that's a hard pattern to fix by hiring.

We configured Cloud Agents across three of their GitHub repositories: one agent focused on code review, another on documentation sync, and a third handling implementation work that had been triaged and scoped but was sitting in a backlog waiting for someone to pick it up.

The review agent didn't write better reviews than their senior engineers. But it reviewed consistently and immediately, so PRs stopped sitting for hours waiting for a first pass. The implementation agent picked up the clearly-scoped backlog items, freeing senior engineers for the work that actually required architectural judgment. The documentation agent solved a drift problem that had been quietly accumulating for two years — docs that said one thing while the code did something slightly different.

Six weeks in, their merge cycle had shortened meaningfully. Not because AI outperformed their team, but because three lanes of work were running in parallel instead of one. Every run was logged in the Console — what changed, what was flagged, which files were touched. Their engineering lead could look at any completed run and see exactly what happened.

For a team that takes code ownership seriously, that visibility matters. You're not trusting a black box; you're reviewing what an agent did the same way you'd review what a contractor did.

Eve and why orchestration is harder than it looks

Eve is the piece of this system that took the longest to get right.

Running one agent is relatively straightforward. Running four agents that share a repository, some of whose outputs depend on each other, against a branch that's actively changing — that's where most parallel agent architectures fall apart. The failure mode isn't usually a single dramatic error. It's quiet drift: an implementation agent that builds on a function a fix agent just changed, a review agent that flags an issue the security agent already caught and marked resolved, a parent task that shows four green runs but whose integrated output makes no sense because the agents had no shared understanding of the dependency order.

Eve holds that shared understanding. It knows the task decomposition, the scope assignments, and the dependency graph before any of the agents start. Agents that can safely run fully in parallel do. Agents that need another run's output before they proceed wait for it — but the wait is explicit and tracked, not a silent stall.
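The "run in parallel where safe, wait explicitly where not" behavior can be sketched as grouping a dependency graph into waves. This is a generic topological-ordering example using Python's standard `graphlib` module, under the assumption that dependencies are known up front; the `plan_waves` function and the agent names are hypothetical, and this is not a description of Eve's actual planner.

```python
from graphlib import TopologicalSorter


def plan_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group runs into waves; every run in a wave can start at the same time.

    `dependencies` maps each run to the set of runs whose output it needs.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # runs with no unmet dependencies
        waves.append(ready)
        sorter.done(*ready)  # mark the wave finished so dependents unlock
    return waves


# Hypothetical task decomposition.
graph = {
    "review": set(),
    "security_audit": set(),
    "bug_fix": set(),
    "feature": {"bug_fix"},  # builds on the function the fix changes
    "integration_check": {"feature", "review", "security_audit"},
}

waves = plan_waves(graph)
```

With this graph, three runs start immediately, `feature` waits only for `bug_fix`, and the final check waits for everything it depends on. Each wait is visible in the plan rather than discovered at merge time.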

The coordination problem in multi-agent systems is well understood in the research literature at this point. Context and state transfer between agents is consistently the primary failure point — not model quality. A highly capable agent operating on stale or incomplete information about what the adjacent agent just did is worse than a less capable agent with accurate state. Eve's job is to make sure every agent has accurate state.

What happens when a single task arrives

Skytells was the first company to ship real-time multi-agent collaboration for software development in production — before the industry coalesced around "agentic coding" as a category, before the frameworks, before it became something every AI product claimed to support. That history matters because it shaped the architecture in ways that aren't obvious from the outside.

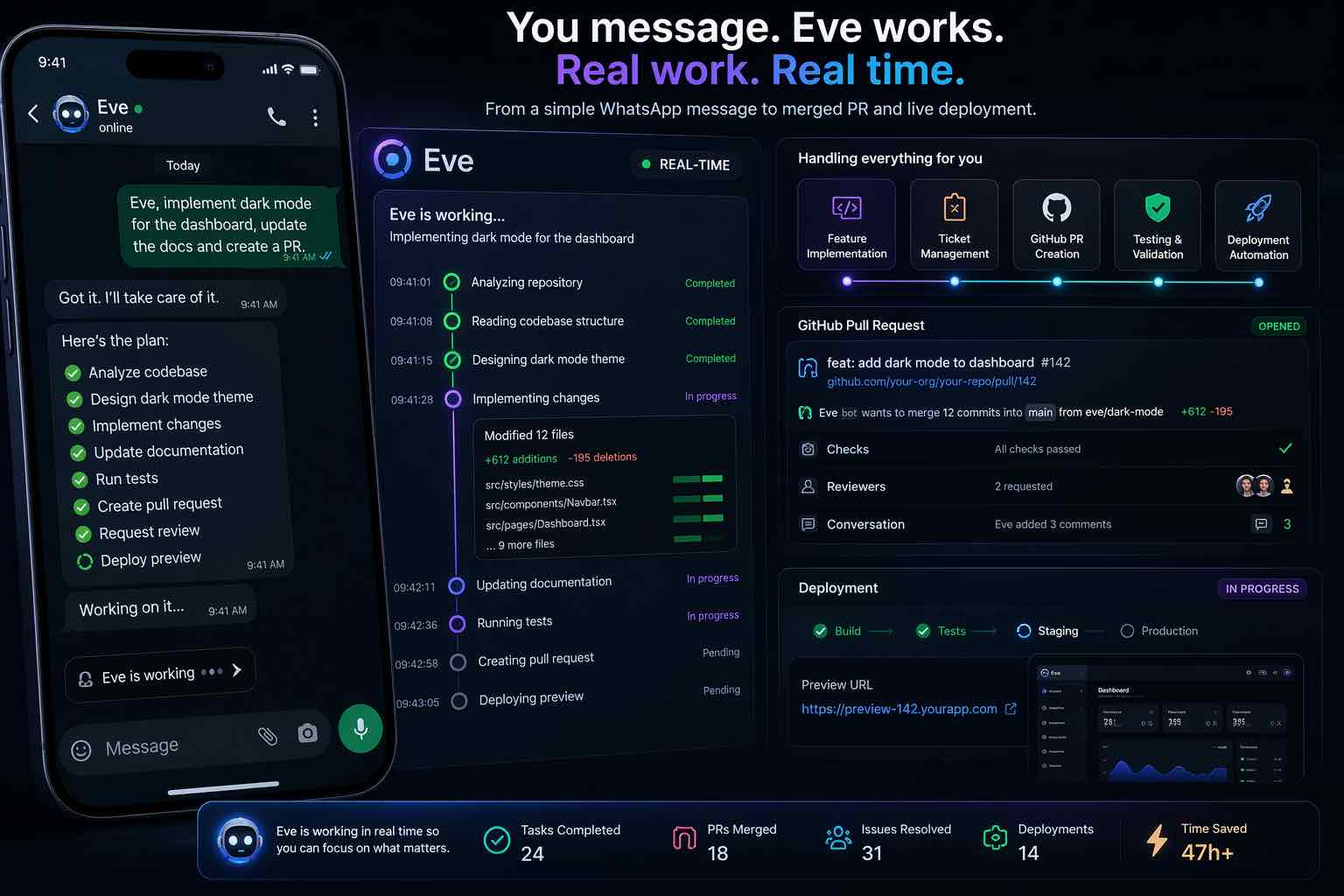

When a task arrives — from Slack, from a Console prompt, from a GitHub event — Eve doesn't assign it to one waiting agent. It reads the task, figures out what it actually requires, and stands up a team.

That determination happens at dispatch time. Eve looks at the task and decides which agent roles are needed, then activates them at the same moment. A single message can trigger:

- A feature agent scoped to the relevant modules, working on the implementation

- A bug fix agent working on a regression found in the same branch during triage

- A review agent going through the existing diff before the new work lands on top of it

- A security audit agent running across the changeset for exposure introduced by recent commits

All four start at the same time. Same repository. Same branch, when the flow allows it. When the branch structure requires separation, Eve manages that too.

Their outputs converge back in Console under the same parent task. The diff, the audit report, the checklist, the findings — all of it visible in one place, attributed to the run that produced it. A team member reviewing the completed task doesn't need to chase down what happened across four separate tools. It's all there.
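The fan-out and convergence described above can be sketched with `asyncio`: one task, several roles started together, and every result collected under a single parent. `ParentTask`, `RunResult`, `run_agent`, and `dispatch` are hypothetical names for illustration, and `run_agent` is a stand-in that does no real work.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class RunResult:
    role: str
    output: str


@dataclass
class ParentTask:
    description: str
    results: list[RunResult] = field(default_factory=list)


async def run_agent(role: str, task: str) -> RunResult:
    """Stand-in for a real agent run; it only yields control and echoes the task."""
    await asyncio.sleep(0)
    return RunResult(role=role, output=f"{role} finished: {task}")


async def dispatch(task: str, roles: list[str]) -> ParentTask:
    """Start one run per role concurrently and attach every result to one parent."""
    parent = ParentTask(description=task)
    # gather schedules all coroutines concurrently and preserves input order.
    parent.results = await asyncio.gather(*(run_agent(role, task) for role in roles))
    return parent


roles = ["feature", "bug_fix", "review", "security_audit"]
parent = asyncio.run(dispatch("Ship lobby timeout fix", roles))
```

Because each result carries the role that produced it, whoever reviews the parent task can see which run is responsible for which output.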

Teams that try to parallelize agent work without this architecture usually discover the coordination tax fast. Execution time goes down; reconciliation time goes up. The net result is often a wash until they solve the coordination problem. Solving it is what Cloud Agents is built around.

Model choice

You choose which models power your agents. Skytells models are our first-class option: the same cutting-edge inference stack we ship for production workloads, tuned for the kinds of tasks agents run in repositories every day.

You can also configure agents to use models you find through Explore models: a single catalog of Skytells and partner offerings so you can compare capabilities, pick what fits a role, and connect it in Console without juggling separate vendor surfaces.

Different agent roles often call for different strengths: a heavy review pass is not the same problem as a tight implementation pass. Per-agent configuration lets you match capability to responsibility without locking every agent to a single default.
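As a rough illustration of per-agent model selection, the sketch below maps roles to models with a fallback default. The model identifiers and the `model_for` helper are placeholders invented for this example; they are not real entries from the Explore models catalog or actual Console settings.

```python
# Placeholder identifiers, not real model names.
DEFAULT_MODEL = "example-default-model"

MODEL_BY_ROLE = {
    "reviewer": "example-large-context-model",  # heavier review pass
    "implementer": "example-fast-model",        # tight implementation pass
}


def model_for(role: str) -> str:
    """Return the model configured for a role, or the default if none is set."""
    return MODEL_BY_ROLE.get(role, DEFAULT_MODEL)


reviewer_model = model_for("reviewer")
fixer_model = model_for("fixer")  # no override, so this falls back to the default
```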

Triggering runs from Slack, WhatsApp, and Telegram

GitHub is the primary operational surface for Cloud Agents, but it's not the only entry point. You can trigger agent runs from Slack, WhatsApp, or Telegram — and the run that gets created is identical in every way to one created from the Console.

Same run record. Same outputs. Same visibility in Console the next morning. There isn't a second-class category of run that channel commands create.

For a lot of teams, the practical value here is straightforward: something happens at 9 PM, someone sends a message to a Slack channel, an agent picks it up, and by the morning the run is complete with outputs ready to review. Nobody needed to log in to the Console, nobody needed to remember how to configure the run manually. They just described what they needed.

The single run system is a deliberate design choice. Two separate audit trails — one for Console-created runs, one for channel-created runs — would be a mess operationally. There's one system. All commands create runs in it.
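A minimal sketch of that idea: every entry point calls the same function and produces the same kind of record, so there is only one list to audit. `Run` and `RunRegistry` are hypothetical names, and the in-memory list is an assumption standing in for whatever storage the real run system uses.

```python
from dataclasses import dataclass
from itertools import count


@dataclass(frozen=True)
class Run:
    run_id: int
    source: str  # e.g. "console", "slack", "whatsapp", "telegram", "github"
    task: str


class RunRegistry:
    """Single registry: every channel creates runs through the same path."""

    def __init__(self) -> None:
        self._ids = count(1)
        self._runs: list[Run] = []

    def create(self, source: str, task: str) -> Run:
        run = Run(run_id=next(self._ids), source=source, task=task)
        self._runs.append(run)
        return run

    def all_runs(self) -> list[Run]:
        """Return a copy of the one audit trail, in creation order."""
        return list(self._runs)


registry = RunRegistry()
console_run = registry.create("console", "Review open PRs")
slack_run = registry.create("slack", "Find the commit behind the 9 PM regression")
```

The source channel is just a field on the record. It does not change the record's shape or where it is stored.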

14 years of AI research and infrastructure

Cloud Agents draws on two things Skytells has been building since 2012: AI research and production infrastructure.

The research side covers foundation model development, agent reasoning, and multi-system coordination. The infrastructure side is what actually runs AI workloads reliably at scale — the systems serving thousands of organizations across technology, healthcare, finance, and government sectors, the kind of infrastructure where an outage has real consequences and "we'll fix it in the next deploy" isn't an answer.

Both matter for this product specifically. Good agent architecture that runs on unreliable infrastructure isn't useful. Solid infrastructure running an agent system with poorly understood coordination behavior isn't safe to depend on. Cloud Agents is the result of those two things being built by the same team, for the same production standard.

What we've learned from running AI at scale is that the most common failure in production AI systems isn't the model doing the wrong thing. It's the absence of a clear record of what the model did. You can't investigate an incident you can't trace. You can't improve a system you can't audit. The run system in Cloud Agents — every output logged, every action attributed, every result visible in Console — comes directly from that.

When Skytells Research started seriously working on multi-agent systems, the open question was whether you could trust them with real operational work. The answer we kept arriving at was: yes, but only if the system around them is built to the same standard as the work itself. Cloud Agents is what that answer looks like in production.

Creating and configuring your agents

You create agents in the Console, and the configuration is explicit: a name, a role (reviewer, implementer, fixer), instructions that define the agent's behavior and output format, context that scopes which parts of the repository it operates within, and channel settings that control where commands can originate.
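The five configuration fields above can be pictured as a small validated record. This is a sketch under stated assumptions, not the Console's schema: `AgentConfig` is a hypothetical class, the role list treats the three examples above as the full set, and the channel names are inferred from the entry points this post mentions.

```python
from dataclasses import dataclass

# Assumed value sets for illustration; the real product may allow more.
ALLOWED_ROLES = {"reviewer", "implementer", "fixer"}
ALLOWED_CHANNELS = {"console", "github", "slack", "whatsapp", "telegram"}


@dataclass(frozen=True)
class AgentConfig:
    name: str
    role: str
    instructions: str          # behavior and output format
    context: tuple[str, ...]   # repository paths the agent operates within
    channels: frozenset[str]   # where commands may originate

    def __post_init__(self) -> None:
        if not self.name.strip():
            raise ValueError("name must not be empty")
        if self.role not in ALLOWED_ROLES:
            raise ValueError(f"unknown role: {self.role}")
        unknown = self.channels - ALLOWED_CHANNELS
        if unknown:
            raise ValueError(f"unknown channels: {sorted(unknown)}")


reviewer = AgentConfig(
    name="pr-first-pass",
    role="reviewer",
    instructions="Review each diff and return findings as a checklist.",
    context=("services/matchmaking/",),
    channels=frozenset({"console", "slack"}),
)
```

Validating at construction time means a misconfigured agent fails when it is created, not at midnight when someone first tries to dispatch to it.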

Agents are not limited to Git and PR workflows. You can configure them to provision deployments, databases, and other platform resources in the Skytells ecosystem, so that when a run finishes or a gate you define is met, provisioning and wiring happen automatically instead of as a separate manual pass in another tool.

That configuration is what makes an agent reliable across runs. Without it, an agent improvises. With it, an agent consistently does what you configured it to do, with the same behavior whether the run was triggered from a PR event or a Slack message at midnight.

When you dispatch a task, the agent runs against its configured context. The output comes back in the Console: findings, diffs, checklists, whatever the agent's role produces. Those outputs flow into your repository's review process as PR comments, patches, or documented action items — not into some separate AI workspace that your engineers need to check in a different tab.

Your engineers stay in control. The agents do the work. Eve keeps concurrent runs from stepping on each other. That's the operating model.

Getting started

Start at the Skytells Console. Head to console.skytells.ai/agent for your agent workspace, where you can also create a new agent.

Give it a name, pick a role, write its instructions, attach repository context, and set which channels can dispatch runs to it. Then send a task from the Console prompt box. The run will appear with its outputs and status as it executes.

Channel configuration (Slack, WhatsApp, Telegram) is in the agent's settings once it's been created.

Documentation on agent configuration, run structure, and model options: learn.skytells.ai/docs/products/cloud-agents.

Product page, interactive GitHub flow walkthrough, and full capability overview: skytells.ai/agents.

The constraint in software engineering has never been intelligence. Teams have always had smart engineers. The constraint is how much can happen in parallel without creating a coordination nightmare. We've been working on that problem for 14 years. Cloud Agents is where we think it's finally solved well enough to depend on.